If you’ve read our other posts on web performance, you’ve noticed we are big fans of Cloudflare. It adds caching, offloads the origin server to lower load and improve server response time, provides robust DNS, auto-optimizes images with WebP and AMP formats, and adds the security of a web application firewall: Cloudflare plans simplify the life of site owners. One of the recently introduced features is Argo, which provides smart routing and promises to improve performance one step further. In this post we outline what Argo does and share the results of our test drive.

Users and site owners want a fast Internet

Whether we are on a mobile device or a desktop, when accessing a website one of the basic requirements we expect is for the site to load fast and to respond quickly to our requests. Slow websites frustrate users: they leave before consuming the content, or they consume less than they otherwise would. In addition, the performance of the site leaves a memory: when a site is consistently slow we remember it and tend not to visit it again. But if it’s fast, the opposite.

Since users want a fast Internet and speedy sites, site owners do too. And since it matters to users, search engines have made speed a ranking factor… which in turn can attract more users. Performance can make a huge difference once content quality is there.

Making websites fast

There are many factors that determine the performance of a site: server capacity, proximity to the user, the use of server caching, database cache, CDN, compression, network routes, and the cleanliness of the code that’s executed. Google’s AMP initiative and Facebook’s Instant Articles technology are in fact ways to address many of these factors. AMP enforces code cleanliness for HTML and JavaScript: this alone removes significant bloat from poorly written code that’s no longer allowed to ship. Google won’t accept it, so programmers are forced to write clean code.

Cloudflare Argo is a new product that addresses network routes and caching.

What does Cloudflare Argo do?

The internet is a maze, not unlike automobile traffic. As I read about Argo I realized that there is a protocol named BGP, Border Gateway Protocol, which defines how internet traffic is routed, and it is slowly but surely getting out of date as the size of the total network keeps growing. Routes fail, connections are dropped or take a long time. There are different routes for data to travel from point A to point B, and some are faster than others.

Argo is part of a new category of products, like datapath.io, that enable web traffic to travel faster through “smart routes”. Since Cloudflare operates many data centers around the globe and collects traffic information in real time, it can route data faster than the standard paths by knowing which routes are congested and avoiding them. Smart routing directs traffic from your origin server to the user through the fastest route.
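To make the idea of avoiding congested routes concrete, here is a toy sketch in Python. It is not Cloudflare's algorithm, which is not described in this post. It simply runs a standard shortest-path search (Dijkstra) over a made-up graph; the node names and latencies are hypothetical and chosen only for illustration.

```python
import heapq

def fastest_route(latency_ms, source, target):
    """Return (path, total_ms) for the lowest-latency path, using Dijkstra."""
    best = {source: 0.0}          # lowest known latency to each node
    previous = {}                 # back-pointers used to rebuild the path
    queue = [(0.0, source)]
    while queue:
        cost, node = heapq.heappop(queue)
        if node == target:
            break
        if cost > best.get(node, float("inf")):
            continue              # stale queue entry
        for neighbor, link_ms in latency_ms.get(node, {}).items():
            new_cost = cost + link_ms
            if new_cost < best.get(neighbor, float("inf")):
                best[neighbor] = new_cost
                previous[neighbor] = node
                heapq.heappush(queue, (new_cost, neighbor))
    if target not in best:
        return None, float("inf")
    path = [target]
    while path[-1] != source:
        path.append(previous[path[-1]])
    return list(reversed(path)), best[target]

# Hypothetical link latencies in milliseconds (illustrative only).
links = {
    "origin": {"pop_a": 40, "pop_b": 25},
    "pop_a": {"user": 30},
    "pop_b": {"user": 35},
}
normal_path, normal_ms = fastest_route(links, "origin", "user")
print(normal_path, normal_ms)

# Simulate congestion on the origin -> pop_b link and route again.
links["origin"]["pop_b"] = 90
congested_path, congested_ms = fastest_route(links, "origin", "user")
print(congested_path, congested_ms)
```

When the link to `pop_b` becomes congested, the search switches to the path through `pop_a`. A real system would feed measured, continuously updated latencies into this kind of decision.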

In addition, Argo claims to increase the amount of content cached on Cloudflare servers, moving more assets a block away from the user so they do not have to travel long distances. Cloudflare announced Argo with a blog post that makes a descriptive analogy: Argo is the Waze of the internet.

Pricing and ease of trial

Cloudflare plans go from free to Pro at $20, Business at $200, and Enterprise at $5000 per month. The tiered pricing means a small incremental cost up to the Pro plan, then a 10X jump from Pro to Business and a 25X jump from Business to Enterprise. Some features like SSL, page rules and Argo can be purchased separately. I initially asked Cloudflare Support if it would be possible to get a limited-time trial of Argo to test the performance benefit. Today there are no trials.

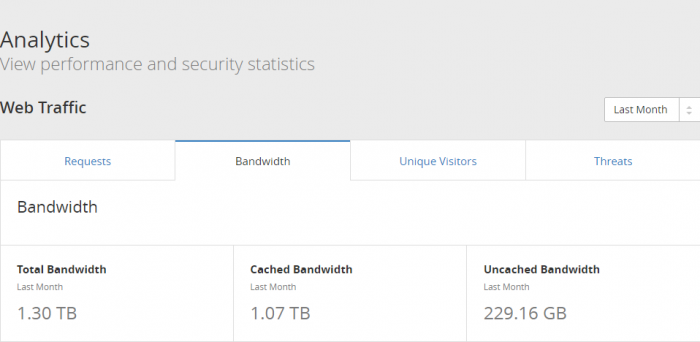

We did a back-of-envelope calculation of how much incremental cost Argo would represent in our case. The site, a food blog that contains lots of images, ships 1.3TB per month. Of this 1.3TB, 1TB is static assets (images already cached in Cloudflare data centers near the user) and approximately 300GB is uncached content that needs to travel from the origin server.

- Base cost of Pro plan: $20

- Argo fixed monthly cost: $5

- Estimated Argo per-GB cost: 1300 * $0.10 = $130

- New estimated monthly cost of Pro plan with Argo enabled: $20 + $5 + $130 = $155

For a 10-day experiment the incremental cost would only be a third of that, so we turned on Argo for 10 days.
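The arithmetic above can be written as a short Python sketch. The $5 fixed fee, the $0.10 per-GB rate and the 1,300 GB of monthly traffic come from the figures in this post; the function name and the 30-day proration are assumptions made for illustration.

```python
PRO_PLAN_USD = 20.00           # base cost of the Pro plan, per month
ARGO_FIXED_USD = 5.00          # Argo fixed monthly cost
ARGO_PER_GB_USD = 0.10         # estimated Argo per-GB cost

def argo_monthly_cost(billed_gb):
    """Estimated Argo cost for one month: fixed fee plus per-GB usage."""
    return ARGO_FIXED_USD + billed_gb * ARGO_PER_GB_USD

argo_usd = argo_monthly_cost(1300)       # all 1.3TB is billed
total_usd = PRO_PLAN_USD + argo_usd

# Assumption: a 10-day trial costs about a third of a 30-day month.
trial_usd = argo_usd * 10 / 30

print(f"Argo per month:     ${argo_usd:.2f}")
print(f"Pro + Argo:         ${total_usd:.2f}")
print(f"10-day Argo trial:  ${trial_usd:.2f}")
```

Changing the argument to `argo_monthly_cost` is an easy way to plug in your own monthly bandwidth.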

Argo setup

The setup of Argo is a breeze. Unlike many performance optimizations there is nothing to change with the origin server. All that a user needs to do is enable Argo in the Cloudflare console. We read the docs, associated costs, and voila: Argo was on. It took under 2 minutes.

Comparing Argo vs. Railgun vs. Cache Everything

There are several options to increase caching and performance. One option we have experimented with a few times, since our content is mostly static other than comments and user ratings, is the Cache Everything strategy. By setting a custom page rule, site owners can cache a lot more than the standard assets and potentially increase performance, especially time-to-first-byte (TTFB). We saw significant performance gains. However, our site serves ads, and for some reason the RPM of those ads decreased when we turned Cache Everything on.

The ad network we use believes the plugin needs to place those ads dynamically for each web page and device form factor, and the increase in caching prevented the ads from loading optimally 100% of the time. But for some sites, Cache Everything can make sense.
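For readers who prefer scripting to clicking through the dashboard, the sketch below builds the JSON body for a Cache Everything page rule in Python. The payload shape follows our understanding of Cloudflare's v4 Page Rules API and should be checked against the current documentation before use. The URL pattern and the helper name are hypothetical, and the code only builds and prints the payload; it does not call the API.

```python
import json

def cache_everything_rule(url_pattern, priority=1):
    """Build a page rule body that sets the cache level to Cache Everything."""
    return {
        "targets": [
            {
                "target": "url",
                "constraint": {"operator": "matches", "value": url_pattern},
            }
        ],
        "actions": [{"id": "cache_level", "value": "cache_everything"}],
        "priority": priority,
        "status": "active",
    }

# Hypothetical pattern: cache only one section of the site.
rule = cache_everything_rule("*example.com/recipes/*")
print(json.dumps(rule, indent=2))
```

Scoping the pattern to a section without ads or comments is one way to experiment with Cache Everything without touching pages where dynamic content matters.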

About Railgun

The next point we looked into was Argo vs. Railgun. At first glance Railgun accelerates dynamic content, and Argo also does. Do these features overlap? Today we are not running Railgun for two reasons: the feature is part of the Business Plan, and requires changes at the origin by adding a memcache instance. We also read that sometimes the setup triggers errors, so on paper this could be interesting, but these hurdles have kept us from giving it a try so far. We asked Cloudflare Support to help us understand how the two differ:

Railgun compresses previously uncacheable web objects up to 99.6% by leveraging techniques similar to those used in the compression of high-quality video. This is used for requests from one of our data centers to your origin web server.

Argo uses tiered caching to reduce requests to your origin, and smart routing to route visitors through the least congested and most reliable paths using Cloudflare’s private network.

They work well together because Argo’s Smart Routing system will autonomously compute and deploy Smart Routes that it believes will improve performance, then revoke those routes if it turns out that they do not improve performance. So if Railgun-direct-to-origin turns out to be faster than Smart Routing-plus-Railgun-to-origin then the system will self-correct and choose the fastest combination of features.

Railgun appears to use compression similar to what the H.264 and H.265 codecs do for video: it ships the dynamic content in smaller packets and decompresses it on arrival, gaining time through smaller, pre-cached packets on the origin server. Argo, on the other hand, does the job at the routing level, with some incremental caching on Cloudflare’s servers.

Other potential benefits of Argo

Cloudflare mentions that customers may benefit from fewer dropped connections and timeouts. We don’t have New Relic or advanced performance monitoring tools to measure this, so we can’t tell if it’s making a difference or not. Analytics for Argo will be available in a few months. This will help measure the difference with and without Argo in terms of pageviews and traffic gained or lost as a result of smart routing.

Performance/Speed metrics

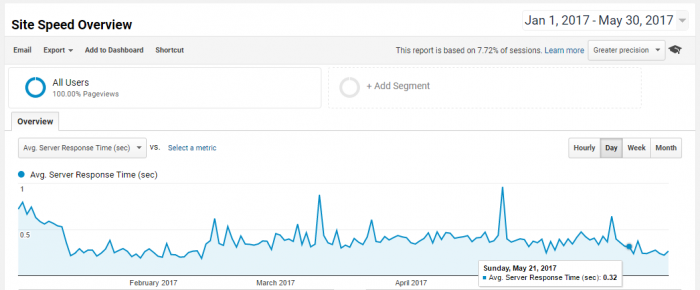

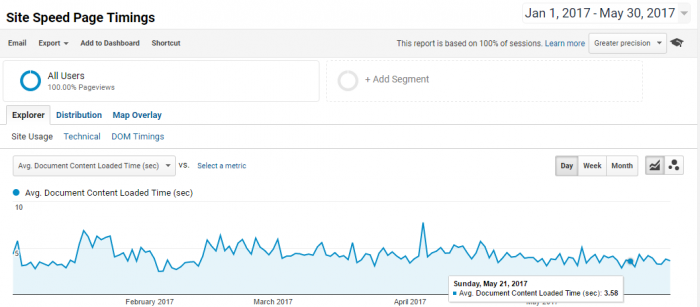

The next sections provide data points of what we saw over the last 10 days. We turned on Argo on the evening of Sunday, May 21 and have run it through today, May 31. For context, we provide data going back to January 1 to get a sense of daily fluctuations and averages. We share server response time, DOM loading and bounce rate.

Our team does not use total page load time, as it mostly depends on ad loading, which runs outside of our domain and does not represent “actionable” site performance other than keeping or removing ads. In discussions with Cloudflare Support, they mentioned that their internal metrics showed a 32% improvement for our site, and that this number is a close equivalent to server response time.

Server response time

In January, you can see that response time improved significantly as we upgraded our origin server. A month later we turned on SSL, which had the unanticipated result of bringing response time back up a little despite now running HTTP/2 from a fast origin server. For the average, we use the March and April baseline: 420 ms. With Argo: 280 ms.

Server response time improvement with Argo: (420-280)/420 = 33%. That’s very close to Cloudflare’s internal metric of 32% improvement.
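The improvement is a relative reduction from the baseline. A minimal Python sketch of that calculation follows, using the 420 ms and 280 ms averages reported in this post; the function name is our own.

```python
def percent_improvement(baseline_ms, new_ms):
    """Relative reduction in response time, as a percentage of the baseline."""
    return (baseline_ms - new_ms) / baseline_ms * 100

improvement = percent_improvement(420, 280)
print(f"{improvement:.1f}% faster than baseline")
```

Dividing by the baseline rather than by the new value matters: dividing the same 140 ms gain by 280 ms would give 50%, which overstates the change.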

DOM loading time

We use DOM loading as a proxy for the time a webpage loads most content from the origin server for the end user to view it. DOM loaded times exclude external calls like ad requests, but include some external dependencies like Related Posts, in our case loading from JetPack’s servers. What this means is that Argo can’t help make JetPack faster, so this metric may include components that need tweaking outside of Argo. May 14-20 baseline for DOM content loaded time without Argo: 3.68s. May 21-27 with Argo: 3.71s. This metric varies week over week and appears to not be impacted by Argo, likely for the reasons discussed above.

Bounce rate

Since we don’t have detailed monitoring of connection timeouts or other metrics relating to the actual user experience from potential faster loading, we can look at bounce rates. Google and other performance vendors often state that each second a user waits for content to load increases the likelihood of the user leaving the page. While it makes intuitive sense it can be difficult to measure how much each second impacts the user experience. Also bounce rate varies depending on traffic sources, so below are weekly bounce rates for Organic traffic:

- May 14-20 without Argo: 79.50%

- Then, May 21-27 with Argo: 78.15%

- Finally, May 28-30 with Argo: 79.30% (partial week)

Part of the challenge in running a 10-day experiment is that it’s subject to fluctuations. Even though the bounce rate over the past couple of days is back to the level of the week before we turned on Argo, it is possible that there is some benefit. A bounce rate reduction of 1 to 1.5 percentage points, if indeed thanks to Argo, would be meaningful.

An entry-level Argo plan?

As we look at performance improvements in these benchmarks, server response time clearly improved. Other metrics, from what we can see, are less convincing. The possibility of lowering the bounce rate and bringing dynamic content to the user faster is appealing. Argo is a feature that site owners want to love. But is it worth nearly 7X the cost of a Pro plan ($135 on top of $20)? This multiple is based on our estimated usage, given that we serve many photos per page, and may be lower for sites with fewer images. But there may be a win-win: if images are already cached in Cloudflare’s local data centers, they are already a block away from the user.

Why use Waze to travel a block away? Back to bandwidth consumption: today’s Argo plan is a 100% smart routing plan, including smart routing for nearby, already cached content. Why not use Waze just for what needs to travel from the origin, plus the incremental cache percentage that Argo provides? Instead of users debating whether they can afford 1.3TB of bandwidth, Argo would charge only for the accelerated 300GB of uncached content: $5 + $30 per month. Instead of cost increasing from $20 to $155 per month, Pro plus an entry-level Argo would grow total cost from $20 to $55, and possibly provide similar performance improvements in TTFB and bounce rate.
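The two billing models can be compared side by side with a small Python sketch. The entry-level model is our proposal, not an existing Cloudflare plan, and the function and parameter names are hypothetical; the dollar figures and traffic volumes are the estimates used in this post.

```python
def monthly_total(total_gb, uncached_gb, bill_uncached_only,
                  pro_usd=20.00, argo_fixed_usd=5.00, per_gb_usd=0.10):
    """Pro plan plus Argo, billing either all traffic or only uncached traffic."""
    billed_gb = uncached_gb if bill_uncached_only else total_gb
    return pro_usd + argo_fixed_usd + billed_gb * per_gb_usd

current_usd = monthly_total(1300, 300, bill_uncached_only=False)
entry_level_usd = monthly_total(1300, 300, bill_uncached_only=True)

print(f"Argo on all traffic:       ${current_usd:.2f}")
print(f"Argo on uncached traffic:  ${entry_level_usd:.2f}")
print(f"Difference per month:      ${current_usd - entry_level_usd:.2f}")
```

The gap between the two totals grows with the share of traffic that is already cached, which is why image-heavy sites would benefit most from billing on uncached content only.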

If you are paying for bandwidth as a separate line item in your site hosting or platform, the savings from increased caching might pay for the incremental cost of Argo. But if you are using plans where bandwidth is included, like AWS Lightsail, AMIMOTO, LiquidWeb and other hosts with fixed plans, the cost-benefit equation needs to show the benefits to the user. These could include more pageviews, more time on site, a lower bounce rate, or a better search ranking from speed improvements.

Conclusion

Argo is brand new and exciting enough for us to run a benchmark and speed test. At first glance it brings clear improvements to server response time, while it’s less clear how it impacts other metrics. Cost is non-trivial for sites that serve lots of photos, such as travel blogs and food blogs, where an entry-level plan that accelerates only uncached content could make Argo affordable to a much larger user base. Using Waze to travel across a city makes sense, but why use it when content is only a block away from the user? Maybe a page rule in the Cloudflare panel could accelerate only what comes from the origin and accomplish that goal.

The ability to turn on Argo instantly, with no installation and no change to the origin, makes it a no-brainer to try and experiment directly. It will cost you some money, so run it for long enough to validate your test. Then you will know if it’s worth it.

Your problem can be solved by adding another domain just for static content, with Argo disabled. This also gives the benefit of not sending cookies with every request. Then you can just enable Argo on your “root” site, and only pay for the un-cacheable traffic.

It’s an interesting idea, more complex than a Cloudflare page rule which would solve the problem without moving assets to another domain, but for new sites just getting going it could be efficient.

Enabling argo improved my server response time of around 500ms. Nice! But I still failed in Pagespeed Insights, because my server response time is still above 200ms T__T This is sooo frustrating T__T

Correct, Argo solves for transit speed, so core response time still matters. Do you have a cache on your origin server? You could also try Cloudflare’s Cache Everything setting, if your content is mostly static. Check https://www.firstpractica.com/192/reduce-server-response-time/. Good luck